Hello, everyone!

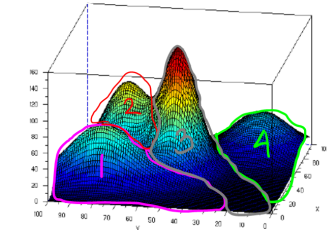

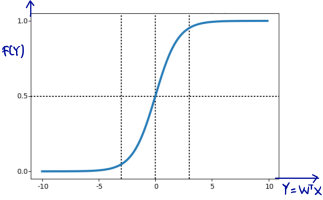

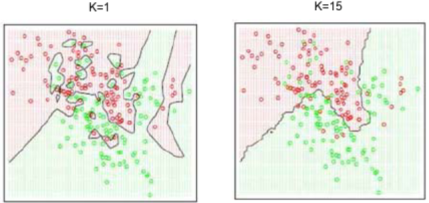

In this post, I'm sharing a video series on image processing and computer vision. The series covers the theory of each topic in detail, and the following session walks through a hands-on implementation in C++ using the OpenCV library.

The material is adapted from Gonzalez's book “Digital Image Processing”, from Chapter 1 through Chapter 12 (Object Detection), plus several computer vision topics such as stereo vision. The slides and code are available on GitHub here. They are free to use, whether as a reference for university courses or for self-study, as long as the source is credited.

I hope this video series helps you understand image processing and computer vision, and contributes to a growing science and engineering culture in Indonesia. Cheers 🙂